Sargalay — LLM API Gateway

Full-stack LLM reselling platform for Myanmar developers. Hono + Bun backend acts as a transparent proxy to 300+ AI models with real-time usage-based billing, balance-first proxy guard, and MMPay/MMQR integration for local Myanmar payments. Includes a Next.js 15 portal with custom i18n (English + Burmese), a streaming AI assistant with search_models tool and conversation summarization, and non-blocking model price caching.

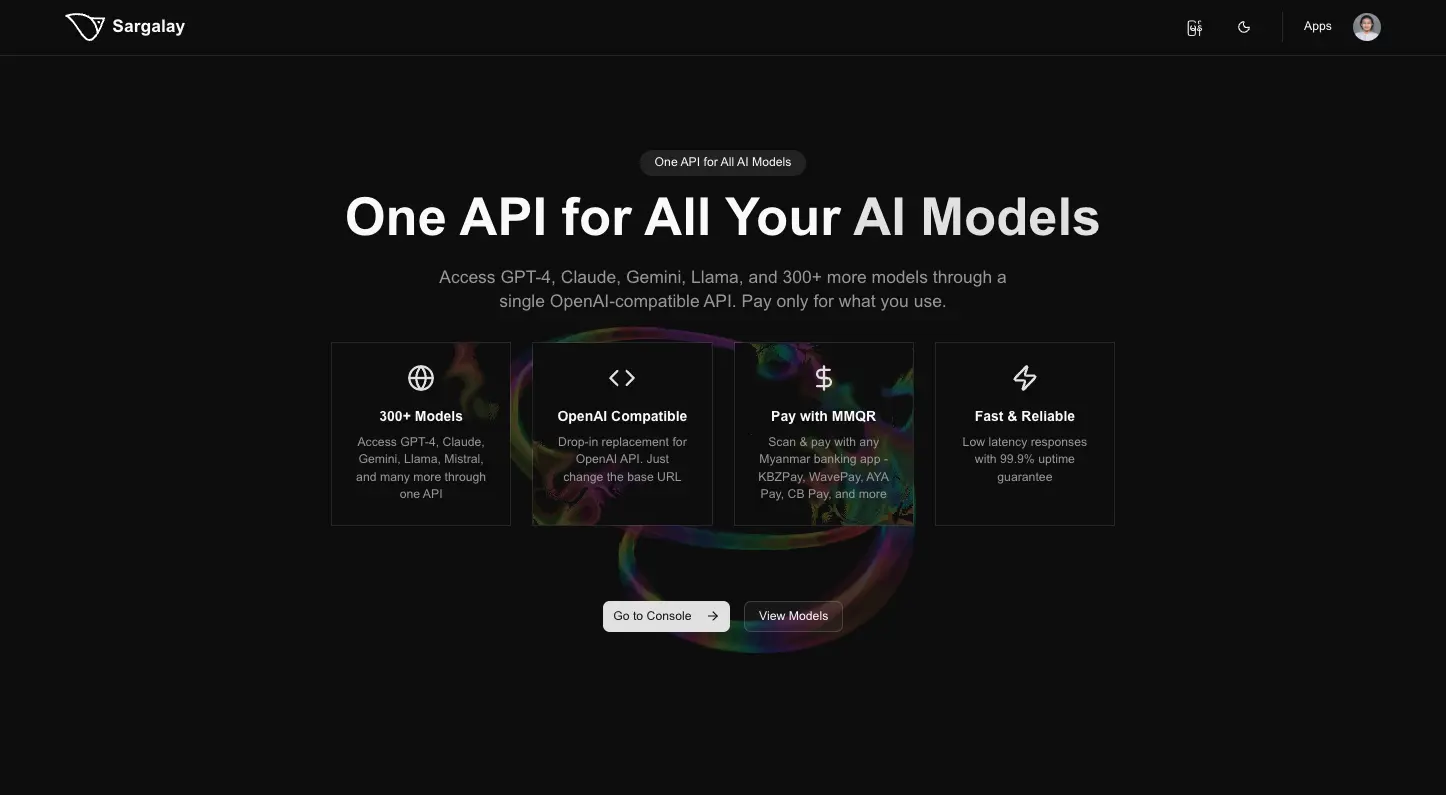

AI Sargalay — LLM API Gateway

A full-stack LLM reselling platform built for Myanmar developers, providing OpenAI-compatible access to 100+ AI models (GPT-4, Claude, Gemini, Llama) with local payment integration.

Overview

AI Sargalay makes frontier AI models accessible to Myanmar developers through a unified OpenAI-compatible API with usage-based billing and local payment support. Developers get a single API endpoint and top up their balance using any Myanmar banking app — no USD card required.

Key Features

- 100+ Models — OpenAI-compatible API access to GPT-4, Claude, Gemini, Llama, and more

- Real-Time Billing — Actual token costs are captured from upstream SSE response chunks, marked up, and deducted from user balances immediately — no estimation error

- Balance-First Guard — Balance is validated before every proxied request, preventing users from consuming credits they can't pay for

- MMPay / MMQR Payments — Top up with KBZPay, WavePay, AYA Pay, or CB Pay — any Myanmar banking app — with real-time payment confirmation via Server-Sent Events

- Model Catalog — Browseable catalog with MMK pricing, context windows, modality filters, and ISR rendering

- AI Assistant — Streaming chat assistant that knows the platform: available models, pricing, payment methods, and integration guides
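The balance-first guard and the marked-up deduction described in the list above can be sketched as two small functions. This is a minimal sketch under stated assumptions, not the platform's actual code: the function names, the integer micro-USD representation, the HTTP 402 status, and the percentage-based markup are all hypothetical.

```typescript
// Hypothetical sketch: names, units (integer micro-USD), and the 402 status
// are illustrative assumptions, not taken from the project.
type GuardDecision =
  | { allowed: true }
  | { allowed: false; status: 402; error: string };

// Reject a request before it is proxied upstream when the balance is not positive.
function guardBalance(balanceMicroUsd: number): GuardDecision {
  if (!Number.isFinite(balanceMicroUsd) || balanceMicroUsd <= 0) {
    return { allowed: false, status: 402, error: "insufficient_balance" };
  }
  return { allowed: true };
}

// Apply a percentage markup to the upstream cost and return the new balance.
// Rounding up means the platform never charges less than the marked-up cost.
function chargeWithMarkup(
  balanceMicroUsd: number,
  upstreamCostMicroUsd: number,
  markupPercent: number,
): number {
  const charged = Math.ceil((upstreamCostMicroUsd * (100 + markupPercent)) / 100);
  return balanceMicroUsd - charged;
}
```

Because the cost is only known once the response has finished streaming, a guard of this shape can stop a user who has no balance left, but a single request may still push a small positive balance below zero.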

Architecture

Monorepo — Separate Deployments

ai-gateway/
├── apps/
│   ├── worker/  → Cloudflare Worker (Hono RPC + better-auth, port 8787)
│   ├── web/     → Vercel (Next.js 15, public pages only)
│   └── portal/  → Cloudflare Pages (Vite + TanStack Router, user dashboard)
└── packages/
    ├── @ai-gateway/types: shared Zod schemas (single source of truth)
    ├── @ai-gateway/db: Drizzle ORM schema + D1 migrations
    ├── @ai-gateway/core: shared repos, use cases, auth utils
    ├── @ai-gateway/proxy-core: LLM proxy logic (revenue-critical)
    └── @ai-gateway/ui: shared React components (shadcn/ui)

All apps share typed API contracts via Hono RPC (AppType) — the frontend client is fully type-safe with no code generation step.

Edge-First Architecture

The entire backend runs on Cloudflare Workers at the edge: globally distributed, with zero cold starts. The database is Cloudflare D1 (SQLite), co-located with the worker for low-latency reads. Model pricing is cached at the Cloudflare CDN layer with a 5-minute TTL, so the catalog is served without hitting the database on every request.
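The effect of a 5-minute TTL can be illustrated with a small read-through cache. Note that this swaps in an in-memory cache for the CDN cache the text describes, purely to show the expiry behaviour; `createTtlCache`, the synchronous `load` callback, and the injectable clock are assumptions made so the sketch stays self-contained.

```typescript
// Hypothetical in-memory stand-in for a CDN cache entry with a time-to-live.
const PRICING_TTL_MS = 5 * 60 * 1000; // the 5-minute TTL mentioned in the text

// Returns a getter that calls `load` only when no fresh value is cached.
function createTtlCache<T>(
  load: () => T,
  ttlMs: number = PRICING_TTL_MS,
  now: () => number = Date.now,
): () => T {
  let cached: { value: T; expiresAt: number } | null = null;
  return () => {
    const t = now();
    if (cached === null || t >= cached.expiresAt) {
      // Cache miss or expired entry: reload and restart the TTL window.
      cached = { value: load(), expiresAt: t + ttlMs };
    }
    return cached.value;
  };
}
```

With this shape, any number of catalog reads inside one TTL window trigger at most one load, which is the property that keeps the database out of the hot path.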

Per-User API Keys

Each user is provisioned a dedicated API key at registration. The platform uses this key for proxying, with the upstream provider as the source of truth for balance and usage. A top-up first fetches the key's current limit_remaining, then PATCHes limit to remaining + topupUsd. This eliminates the need for local balance tracking or usage reconciliation.
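The top-up arithmetic can be sketched as follows. The `KeyLimitClient` interface and its method names are hypothetical placeholders for whatever key-management calls the upstream provider exposes; only `limit_remaining`, `limit`, and `topupUsd` come from the description above.

```typescript
// Hypothetical client for the upstream provider's key-management API.
interface KeyLimitClient {
  getLimitRemaining(keyId: string): Promise<number>; // reads limit_remaining
  patchLimit(keyId: string, limitUsd: number): Promise<void>; // PATCHes limit
}

// Pure step of the flow: limit = remaining + topupUsd.
function nextLimit(limitRemainingUsd: number, topupUsd: number): number {
  if (!Number.isFinite(topupUsd) || topupUsd <= 0) {
    throw new Error("topupUsd must be a positive number");
  }
  return limitRemainingUsd + topupUsd;
}

// Fetch the remaining limit, then write back the increased limit.
async function applyTopup(
  client: KeyLimitClient,
  keyId: string,
  topupUsd: number,
): Promise<number> {
  const remaining = await client.getLimitRemaining(keyId);
  const limit = nextLimit(remaining, topupUsd);
  await client.patchLimit(keyId, limit);
  return limit;
}
```

A read followed by a write is not atomic, so two top-ups confirmed at the same moment for the same key could overwrite each other. A real implementation would likely need to serialize top-ups per user or make the webhook handler idempotent.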

SSE Payment Flow

MMQR payments are confirmed via MMPay webhook callbacks. The portal listens on a Server-Sent Events stream (GET /me/topup/:id/stream) that polls D1 every second and pushes a status event when the payment completes — the stream closes automatically on success or timeout.
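The polling loop behind such a stream might look like the sketch below. The status values, the 2-minute default timeout, and the `readStatus` / `send` callbacks are assumptions; in the real worker `readStatus` would be a D1 query and `send` would write to the SSE response body.

```typescript
// Hypothetical status values; only "payment completes" and "timeout" come from the text.
type TopupStatus = "pending" | "completed" | "failed";

// Serialize one Server-Sent Events frame (event name + JSON data + blank line).
function formatSseEvent(event: string, data: unknown): string {
  return `event: ${event}\ndata: ${JSON.stringify(data)}\n\n`;
}

// Poll until the top-up leaves "pending" or the timeout elapses, then send one
// final status frame. Returning from this function is where the stream closes.
async function streamTopupStatus(
  readStatus: () => Promise<TopupStatus>,
  send: (frame: string) => void,
  intervalMs: number = 1000,
  timeoutMs: number = 120000,
  sleep: (ms: number) => Promise<void> = (ms) =>
    new Promise<void>((resolve) => setTimeout(resolve, ms)),
): Promise<void> {
  for (let elapsed = 0; elapsed < timeoutMs; elapsed += intervalMs) {
    const status = await readStatus();
    if (status !== "pending") {
      send(formatSseEvent("status", { status }));
      return;
    }
    await sleep(intervalMs);
  }
  send(formatSseEvent("status", { status: "timeout" }));
}
```

Injecting `sleep` keeps the loop testable without real delays, and bounding the loop by `timeoutMs` ensures an abandoned payment does not hold a connection open indefinitely.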

AI Assistant

Streaming chat assistant built with Google Gemini 2.0 Flash:

- search_models tool: filters the live model catalog by modality, provider, or price, returning IDs resolved client-side from the React Query cache

- browse_models tool: groups all models by output capability (text / image / audio / file)

- Model reference syntax: LLM output can reference @provider/model@, which renders as an inline chip or full model card in the UI

- Conversation summarization: older messages are compressed into a rolling summary every 8 turns, keeping token usage low without losing context
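Two of the assistant behaviours above lend themselves to a short sketch: pulling @provider/model@ references out of LLM output, and compacting older messages into a rolling summary. Everything here is an assumption for illustration: the regular expression, the definition of a "turn" as one user message, the `keepRecent` window, and the synchronous `summarize` callback (which in practice would be a model call).

```typescript
interface ChatMessage { role: "user" | "assistant"; content: string }
interface ConversationState { summary: string; messages: ChatMessage[] }

const SUMMARIZE_EVERY_TURNS = 8; // from the text; a "turn" is assumed to be one user message

// Collect every @provider/model@ reference as "provider/model".
function extractModelRefs(text: string): string[] {
  const pattern = /@([A-Za-z0-9_.-]+)\/([A-Za-z0-9_.:-]+)@/g;
  const refs: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(text)) !== null) {
    refs.push(`${match[1]}/${match[2]}`);
  }
  return refs;
}

// Once enough turns have accumulated, fold everything except the most recent
// messages into the rolling summary; otherwise return the state unchanged.
function compactConversation(
  state: ConversationState,
  summarize: (previousSummary: string, older: ChatMessage[]) => string,
  keepRecent: number = 2,
): ConversationState {
  const turns = state.messages.filter((m) => m.role === "user").length;
  if (turns < SUMMARIZE_EVERY_TURNS) return state;
  const cut = Math.max(state.messages.length - keepRecent, 0);
  return {
    summary: summarize(state.summary, state.messages.slice(0, cut)),
    messages: state.messages.slice(cut),
  };
}
```

Passing the previous summary into `summarize` is what makes the summary rolling: each compaction builds on the last one instead of re-reading the full history.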

Key Technical Decisions

- Proxy billing over estimation: costs are read from actual upstream SSE chunks (stream_options: { include_usage: true }), eliminating estimation drift

- Shared Zod schemas: single source of truth for API validation and TypeScript types across worker, web, and portal

- Hono RPC: end-to-end type safety between worker and frontends without a separate schema registry or code generation

- Edge + D1: Cloudflare Worker + D1 means the API, database, and CDN cache are all in the same infrastructure layer

- Separate frontend apps: apps/web (Next.js, public/SEO) and apps/portal (Vite, authenticated dashboard) are deployed independently, keeping public ISR pages fast and the dashboard bundle lean
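The first decision above, reading usage from the stream rather than estimating it, can be sketched like this. It assumes the OpenAI-compatible streaming format, where setting stream_options.include_usage adds a usage object to a late chunk; the helper names are hypothetical, and the optional `cost` field is an assumption about what an upstream provider might add.

```typescript
// Token counts follow the OpenAI-compatible usage shape; `cost` is an assumed extra.
interface Usage {
  prompt_tokens: number;
  completion_tokens: number;
  total_tokens: number;
  cost?: number;
}

// Ask the upstream to report usage in the stream.
function withUsageReporting<T extends Record<string, unknown>>(
  body: T,
): T & { stream_options: { include_usage: boolean } } {
  return { ...body, stream_options: { include_usage: true } };
}

// Scan SSE text for `data:` lines and return the last usage object seen.
// Assumes complete lines; a real proxy would buffer partial network reads first.
function extractUsage(sseText: string): Usage | null {
  let usage: Usage | null = null;
  for (const rawLine of sseText.split("\n")) {
    const line = rawLine.trim();
    if (!line.startsWith("data:")) continue;
    const payload = line.slice("data:".length).trim();
    if (payload === "" || payload === "[DONE]") continue;
    try {
      const chunk = JSON.parse(payload) as { usage?: Usage | null };
      if (chunk.usage) usage = chunk.usage;
    } catch {
      // Ignore lines that are not valid JSON.
    }
  }
  return usage;
}
```

Since the proxy forwards the stream anyway, scanning the same chunks for usage adds no extra upstream request, and the billed amount reflects what the provider actually counted.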

Tech Stack

Backend: Cloudflare Worker, Hono RPC, TypeScript, Drizzle ORM, Cloudflare D1 (SQLite), Zod

Frontend: Next.js 15 (public), Vite + TanStack Router (portal), React Query, shadcn/ui, Tailwind CSS

Auth: Google OAuth via better-auth — session cookies shared across web and portal via worker-proxied auth endpoints

Payments: MMPay / MMQR (KBZPay, WavePay, AYA Pay, CB Pay)

Infra: Cloudflare (Worker + D1 + CDN cache), Vercel (Next.js)

Role

Solo Full-Stack Developer — end-to-end design and implementation of edge backend, billing engine, LLM proxy, portal, payment integration, AI assistant, and monorepo tooling.